Fifteen years in security operations teaches you that the real problem is rarely the attacker. It’s the gap between detection and decision.

01 – The Line Nobody Wants to Hold

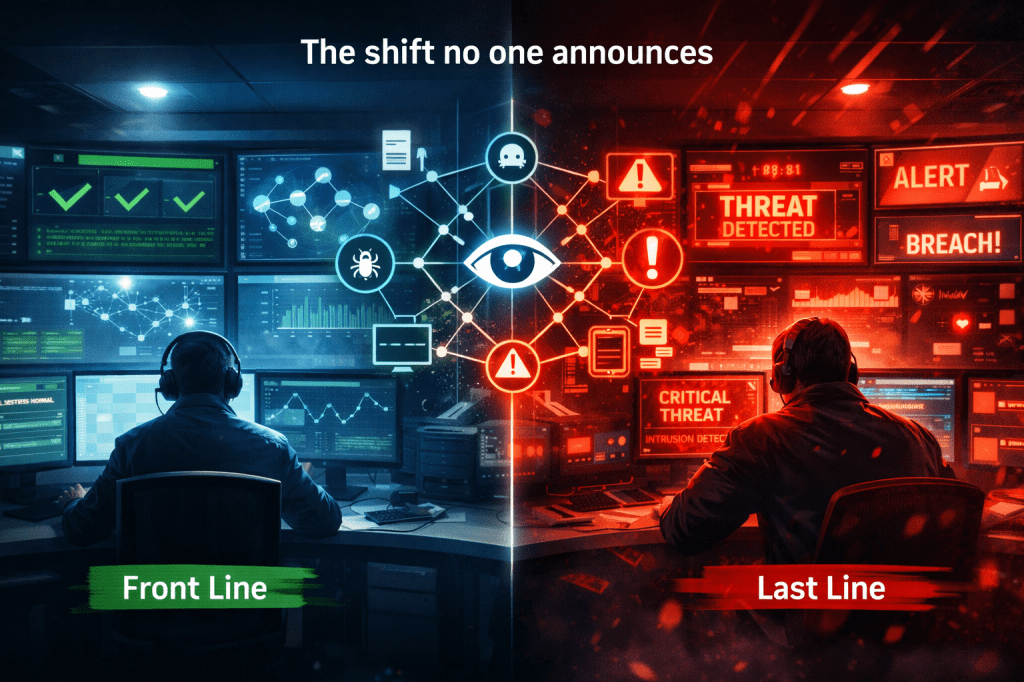

Front line. Last line. Same line.

People in this industry love to call the SOC the front line of defence. Sometimes in the same breath, they call it the last line. Both are true, and that tension tells you everything about what working in security operations actually feels like.

You are the first team to see the signal. You are also the last team standing between the business and a full breach. That is not a contradiction. It is the operational reality of running a SOC, and if you have not made peace with it, you are probably still looking for a process to fix it rather than the mindset to handle it.

The deeper truth is this: the question is never whether your SOC detected something. Detection, at some level, is a solved problem. The real question is what your team does in the next fifteen minutes after detection, under pressure, with incomplete information, and without a single senior person in the room who can approve the call.

Detection is not the hard part. Decision-making under pressure, with half the picture, is where SOCs quietly fail.

02 – Structure vs Reality

The org chart looks great. Until something actually happens.

Every mature SOC has a structure that makes sense on paper. Tier 1 analysts triage alerts. Tier 2 analysts investigate. Tier 3 handles escalation. Threat intelligence feeds into detection engineering. Incident response steps in when things get serious. It is clean, logical, and almost completely useless as a description of what happens during a real incident.

What actually happens is this. A Tier 1 analyst picks up something odd at 11:40 PM. It does not cleanly match any playbook. The alert confidence is medium. The asset involved is doing something it should not be doing, but the analyst has seen false positives on this detection before. They escalate. The Tier 2 analyst on shift is handling two other alerts. They take a look, agree it is worth watching, and flag it for the morning team. The morning team arrives, reviews it, and decides it needs Tier 3 eyes. By the time someone with the authority and context to make a real decision is looking at the alert, it is eight hours old.

In those eight hours, a patient attacker has had time to establish persistence, move laterally, and start identifying what is worth exfiltrating. And your SOC did everything right, technically. The process worked exactly as designed. That is the problem.

03 – The Escalation Problem

Three gaps that will slow you down every time.

Most SOC leaders focus on detection coverage. They track mean time to detect, they tune correlation rules, they argue about SIEM licensing costs. These are real concerns, but they are not where incidents actually go wrong. Incidents go wrong in the space between detection and action, and that space has three consistent failure modes.

The first is escalation delay. Not because analysts are slow, but because the escalation path is designed for certainty. Analysts escalate when they are confident. But real incidents, especially the serious ones, arrive as low-confidence signals with ambiguous context. An analyst who is not empowered to act on a medium-confidence alert will wait for more evidence. The attacker does not wait with them.

The second is unclear ownership. During an incident that spans multiple systems, teams, and geographies, the question of who is leading the response can absorb twenty minutes of conversation on its own. I have seen this play out in situations where the answer was obvious in hindsight, but in the moment, three different teams were each waiting for someone else to take the lead before committing resources.

The third is over-reliance on validation. Analysts are trained to verify before they act. That discipline is right and necessary in normal operations. But during an active incident, waiting for a second confirmation can be the difference between containing a compromise and managing a breach notification. The instinct to validate is good. Making it a hard requirement at the wrong moment is not.

04 – Intelligence and Detection

Your threat intelligence team and your SOC are probably not talking enough.

Detection and threat intelligence should be the same conversation. In most organisations, they are two separate ones. The intelligence team produces reports, context, and adversary profiles. The SOC team monitors alerts, investigates events, and responds to incidents. The two streams of work run in parallel, and they inform each other far less than anyone will admit in a quarterly review.

The consequence is that your analysts are detecting signals without the context to understand what those signals mean. A login from an unusual geography might be a traveller. It might also be an initial access broker who spent six weeks building a convincing identity before using it. Without the intelligence context baked into the detection layer, the analyst sees an anomaly. With it, they might see the beginning of a campaign.

Static blacklists make this worse. A blacklist is a record of things that were bad yesterday. Sophisticated attackers rotate infrastructure on a timeline that makes static indicators close to worthless within days of publication. Blocking on a known-bad IP is the right call. Relying on that block as your primary defence against an adversary who already knows your tooling is not a strategy. It is a comfort blanket.

Pattern-based thinking changes the frame. Instead of asking whether an indicator matches a known-bad list, you ask whether a sequence of behaviours across multiple systems, over a period of time, matches a pattern consistent with attacker activity. That is a harder problem to automate, but it is the right problem to solve. A single alert might be noise. The same alert, appearing three times across different assets over seventy-two hours, is a pattern worth understanding.
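The seventy-two-hour example can be sketched in a few lines. This is a minimal illustration, not a description of any particular SIEM: the `Alert` fields, the rule and asset names, and the thresholds (`min_assets=3`, a 72-hour window) are all assumptions chosen to mirror the paragraph above.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Alert:
    rule: str          # hypothetical detection rule name
    asset: str         # hypothetical asset identifier
    seen_at: datetime  # when the alert fired


def recurring_patterns(alerts, min_assets=3, window=timedelta(hours=72)):
    """Return {rule: sorted assets} for every rule that fired on at least
    `min_assets` distinct assets inside a single sliding `window`."""
    by_rule = defaultdict(list)
    for alert in alerts:
        by_rule[alert.rule].append(alert)

    patterns = {}
    for rule, items in by_rule.items():
        items.sort(key=lambda a: a.seen_at)
        start = 0
        for end in range(len(items)):
            # Advance the left edge until the span fits inside the window.
            while items[end].seen_at - items[start].seen_at > window:
                start += 1
            assets = {a.asset for a in items[start:end + 1]}
            if len(assets) >= min_assets:
                patterns[rule] = sorted(assets)
                break
    return patterns
```

A rule that fires twice on the same asset is left alone. The same rule firing on three different assets inside the window is surfaced as one pattern, which is the point at which an analyst should be asked to look at the alerts together rather than one at a time.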

A blacklist tells you what was dangerous last week. Pattern-based detection tells you what is happening right now.

05 – Platform Thinking

Connect the intelligence. Connect the incidents. Connect the patterns.

The operational model that works is one where intelligence, incident data, and behavioural patterns feed into each other continuously. Not as a product category, not as a vendor pitch, but as a way of thinking about how your SOC processes information.

When your threat intelligence team identifies a new adversary technique, that should translate within hours into updated detection logic. When your analysts close an incident, the artifacts from that investigation should feed back into the intelligence picture. When a pattern emerges across multiple low-severity alerts, your team should have a mechanism to surface it before it becomes a high-severity incident.

This is not a tech problem. Most mature SOCs already have the tools to support this kind of connected operation. It is a process problem, and underneath the process problem, it is a culture problem. It requires intelligence analysts who understand detection engineering, and detection engineers who read intelligence reports, and leadership that creates space for that conversation to happen routinely rather than only during a post-incident review.

Automation supports this model well, when the decision logic is clear. Automated enrichment of alerts with intelligence context, automated correlation of related events, automated notification when a pattern threshold is crossed. These are appropriate uses of automation. Automating the decision about whether to contain an asset when the confidence level is genuinely ambiguous is where automation creates more risk than it removes. The machine should inform the decision. The analyst should own it.
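One way to picture that boundary is a sketch in which automation enriches the alert and suggests an action, but only marks it for automatic execution when the logic is clear. Everything here is hypothetical: the `Alert` fields, the shape of the `intel` lookup, and the 0.9 threshold are illustrative assumptions, not a recommendation for a specific value.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    asset: str
    indicator: str
    confidence: float                       # 0.0 to 1.0, assumed detection score
    context: dict = field(default_factory=dict)


def enrich(alert, intel):
    """Automated step: attach intelligence context for the indicator, if any."""
    alert.context = dict(intel.get(alert.indicator, {}))
    return alert


def recommend(alert, clear_threshold=0.9):
    """Automated step: suggest an action for the analyst.

    `auto_execute` is True only when confidence is high AND intelligence
    context exists. Anything ambiguous is left for a human to decide.
    """
    if not alert.context:
        return {"suggested_action": "monitor", "auto_execute": False}
    return {
        "suggested_action": "contain",
        "auto_execute": alert.confidence >= clear_threshold,
    }
```

A medium-confidence alert with an intelligence match comes back as a containment suggestion with `auto_execute` set to `False`. The machine has informed the decision; the analyst still owns it.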

Metrics sit in the same position. Time to detect (TTD) and time to respond (TTR, which is sometimes not even recorded) are useful numbers. They lead to useful decisions only when you track them in context. A fifteen-minute mean time to respond looks excellent until you realise that your team is closing incidents quickly overnight by escalating them to business-hours teams, and those teams are not responding until morning. The number improved. The outcome did not.
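The gap between the reported number and the real one is easy to make visible if both timestamps are recorded. The sketch below assumes a hypothetical `Incident` record with three fields; most ticketing systems will name these differently, and some will not capture the first real action at all, which is itself worth counting.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Optional


@dataclass
class Incident:
    detected_at: datetime
    soc_closed_at: datetime               # SOC ticket closed or handed off
    first_action_at: Optional[datetime]   # first containment or remediation step


def mean_minutes(deltas):
    """Mean of a list of timedeltas in minutes, or None if the list is empty."""
    if not deltas:
        return None
    return mean(d.total_seconds() / 60 for d in deltas)


def response_metrics(incidents):
    """Compare reported TTR (detection to SOC close) with effective TTR
    (detection to the first real action taken by anyone)."""
    reported = [i.soc_closed_at - i.detected_at for i in incidents]
    acted = [i.first_action_at - i.detected_at
             for i in incidents if i.first_action_at is not None]
    return {
        "reported_ttr_min": mean_minutes(reported),
        "effective_ttr_min": mean_minutes(acted),
        "no_action_recorded": len(incidents) - len(acted),
    }
```

An overnight incident handed off after fifteen minutes and first acted on eight hours later reports a TTR of 15 minutes and an effective TTR of 480. Tracking both side by side is one way to keep the dashboard honest.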

Let’s dive into some real scenarios.

06 – Supply Chain Attacks

Trusted systems behaving differently. Low confidence. High stakes.

Supply chain attacks are the category that keeps SOC leaders honest about the limits of perimeter-focused detection. The signal is not a known-bad IP or a malicious file hash. The signal is a trusted system doing something slightly unusual. A software update that phones home to a new endpoint. A service account making API calls it has never made before. A scheduled task that appeared on a system during a maintenance window and has been running quietly ever since.

None of these signals is individually alarming. Each one, in isolation, has some kind of benign explanation. That is precisely what makes supply chain attacks difficult. Your detection logic is optimised to surface anomalies. Supply chain attacks are designed to look like normal operational noise until the attacker is ready to act.

The response posture for this category of threat has to be different. You cannot wait for high-confidence indicators. By the time a supply chain compromise generates the kind of alert your Tier 1 analyst would escalate without hesitation, the attacker has typically been present for weeks or months. The detection has to happen at the pattern level, across longer timeframes, with lower confidence thresholds and a willingness to investigate signals that do not yet look like incidents.

That requires analysts who are empowered to spend time on low-confidence investigations, and leadership that measures the value of that time appropriately. Hunting on a signal that turns out to be nothing is not wasted effort. It is exactly what threat hunting is supposed to look like from the inside.

07 – Business Email Compromise

Third party. Delayed report. Incomplete visibility. Real decision.

Business Email Compromise cases are where all of the operational gaps covered above converge into a single, messy, time-pressured situation. Let me walk through how this actually plays out.

Case reference: third-party BEC with delayed reporting

Your finance team receives a message from a supplier contact whose email they have been using for two years. The message requests a change to banking details ahead of an upcoming payment. The request is formatted correctly, references the right invoice numbers, and comes from what appears to be a legitimate address. Your finance team flags it to procurement, who approves it and updates the payment details. The payment goes out four days later. Three weeks after that, your supplier contacts your accounts team asking where the payment is.

By the time your SOC is informed, the incident is twenty-three days old. The attacker has long since moved the funds. The compromised mailbox at your supplier has probably been cleaned up. Your visibility into the supplier’s environment is, naturally, zero.

What your team is left with is an email thread, a payment record, and a series of questions that cannot be fully answered. Was the supplier’s mailbox compromised, or was the attacker spoofing the domain? Were other suppliers targeted? Is the attacker still present somewhere in the communication chain? You do not know. You may never know.

This is the reality of BEC investigation. The delayed reporting means the forensic trail is cold. The third-party nature of the compromise means your visibility is constrained by someone else’s security posture. The decision your team has to make is not whether to investigate, but how much resource to commit to an investigation where the recoverable value may be low and the actionable intelligence may be limited.

That is a judgment call. A mature SOC makes it based on risk to the broader organisation, not just the probability of recovering the funds. If this attacker has visibility into your supplier communications, they may also have information about other pending transactions, personnel details, or procurement timelines. Scoping the investigation to the payment event is the obvious call. It is also probably the wrong one.

08 – The Closing Case

Weak signal. No confirmation. Decision required.

In one case, we were monitoring a finance team’s email environment when a set of forwarding rules appeared on a senior account. The rules were subtle. They did not redirect all mail. They targeted messages containing specific keywords related to payments and procurement, forwarding copies to an external address on a domain registered six weeks earlier.

The alert confidence was not high. The forwarding rules could have been set by the account owner for a legitimate purpose. The external domain, while recently registered, was not on any threat intelligence list. The account had not shown any other anomalous behaviour. Every individual indicator was explainable. Taken together, they described something that looked a lot like preparation for a BEC attack.
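The way those individually explainable indicators add up can be sketched as a simple signal count. This is an illustration under stated assumptions, not the tooling used in the case: the `ForwardingRule` fields, the `PAYMENT_TERMS` list, the 90-day domain age cut-off, and the three-signal threshold are all hypothetical, and the domain registration dates are assumed to come from a lookup you already have.

```python
from dataclasses import dataclass
from datetime import date

# Illustrative keyword list; a real one would come from the finance team.
PAYMENT_TERMS = {"invoice", "payment", "remittance", "procurement"}


@dataclass
class ForwardingRule:
    keywords: frozenset   # lowercase terms the rule filters on; empty = all mail
    forward_to: str       # destination address


def weak_signals(rule, internal_domains, domain_registered, today,
                 max_age_days=90):
    """List the weak indicators present on one mailbox forwarding rule."""
    signals = []
    domain = rule.forward_to.rsplit("@", 1)[-1].lower()
    if domain not in internal_domains:
        signals.append("external_destination")
    registered = domain_registered.get(domain)
    if registered is not None and (today - registered).days <= max_age_days:
        signals.append("recently_registered_domain")
    if rule.keywords & PAYMENT_TERMS:
        signals.append("payment_keyword_filter")
    return signals


def worth_a_decision(signals, min_signals=3):
    """True when enough weak signals coincide to put a decision in front
    of an analyst. It does not make the containment decision itself."""
    return len(signals) >= min_signals
```

None of the three signals would justify action alone. The count only says that someone should now decide, which is where the next part of the case picks up.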

The options were straightforward. We could wait for a higher-confidence signal, continue monitoring, and potentially catch the attacker in the act of attempting the fraud. Or we could act now, remove the forwarding rules, lock the account pending investigation, and accept that we might be wrong. Waiting preserved the investigation opportunity. Acting early protected the business.

We contained. The forwarding rules were removed. The account was secured and the owner interviewed. The account owner had not set the rules. Their credentials had been compromised via a phishing email two weeks earlier, and the attacker had been quietly monitoring their inbox ever since. There was an invoice due the following week for a significant amount. The attacker had not yet made their move.

The outcome was not a complete investigation with confirmed attribution and a tidy incident report. The outcome was that the fraud did not happen. The attacker’s window closed before they used it. That is the kind of win that does not look impressive in a metrics dashboard, because nothing bad occurred. Inside the team, we knew exactly what had been avoided.

Running a SOC is about making decisions when visibility and control are incomplete. Every other part of the job exists to support that moment.

The SOC is not the last line by design. It becomes the last line by reality.
